AI-ACCELERATED LEARNING

15 sources


AI-accelerated learning operates at several levels. Large-context tools like NotebookLM compress learning cycles through structured prompt sequences that ask for mental models, expert disagreements, and test questions. Prompting techniques such as Socratic prompting (asking the model questions instead of giving it directives) are claimed to improve output quality by eliciting deeper reasoning. Domain-specific prompt libraries for market research, consulting, and competitive intelligence are emerging as a key productivity unlock, and AI can now generate consulting-grade deliverables (McKinsey-style slides with data visualizations) from detailed prompts, putting analysis that once required expensive professional services within reach of individuals. Open-source GitHub repos are increasingly framed as the primary educational institution for AI practitioners, with community engagement (stars) serving as a quality filter that traditional credentials do not provide.

The newest frontier is the self-improving knowledge base and agent. A cheap weekend setup (a folder structure plus a schema file) becomes a compounding company asset when query answers are fed back into the corpus and monthly health checks flag contradictions and gaps, while local tools like claude-smart turn repeated mistakes into explicit, reusable learnings. In both cases the system audits and teaches itself through use.

The same applied-loop logic is reshaping how skills are learned and hired for: an AI prep routine before meetings replaces flying blind, and OpenAI's Forward Deployed Engineer interview tests candidates on "the actual loop" of real-world implementation rather than on algorithmic puzzles.
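The three-prompt sequence (mental models, expert disagreements, test questions) can be kept as reusable templates. This is a minimal sketch: the prompt wording, the count of 10 questions, and the helper names `build_learning_prompts` and `build_follow_up` are illustrative assumptions rather than prompts quoted from the sources, and the call to NotebookLM or any other model is left out. The follow-up template mirrors the error-driven loop listed under Insights.

```python
# Hypothetical templates paraphrasing the three-prompt learning sequence;
# the exact wording is an assumption, not quoted from the original sources.
LEARNING_SEQUENCE = (
    "What are the 5 core mental models that every expert in {field} shares?",
    "What are the fundamental disagreements between experts in {field}, "
    "and what is the strongest argument on each side?",
    "Write 10 questions that test deep understanding of {field} rather than "
    "memorization, ordered from easiest to hardest.",
)

# Sent after each wrong self-test answer (the error-driven follow-up loop).
ERROR_FOLLOW_UP = (
    "My answer was: {answer}\n"
    "Explain why this is wrong and what I'm missing."
)


def build_learning_prompts(field: str) -> list[str]:
    """Return the three prompts, in order, for the given field."""
    return [template.format(field=field) for template in LEARNING_SEQUENCE]


def build_follow_up(answer: str) -> str:
    """Return the follow-up prompt for a wrong self-test answer."""
    return ERROR_FOLLOW_UP.format(answer=answer)


if __name__ == "__main__":
    for prompt in build_learning_prompts("monetary economics"):
        print(prompt)
```

Keeping the prompts as data rather than retyping them makes the sequence repeatable across fields, which is the point of treating it as a routine.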

Guides

The AI-Accelerated Learning Playbook: From NotebookLM to Consulting-Grade Deliverables

Structured prompt sequences that compress years of domain expertise into hours: the three-prompt learning sequence, Socratic prompting, domain-specific prompt libraries, and how to generate McKinsey-style analysis without hiring a consulting firm.

AI-Native GTM Engineering: From Enrichment Pipelines to $25 CPLs

How B2B growth teams are replacing manual prospecting with technical GTM systems: Clay Ads enrichment-powered targeting, AI competitive intelligence that extracts pricing and roadmap signals from public data, and LinkedIn content strategies that compound organic reach.

Insights

- Asking an LLM for "the 5 core mental models experts share" extracts structural knowledge rather than surface summaries -- it targets the frameworks that take years of domain experience to internalize (from notebooklm accelerated learning)

- The three-prompt learning sequence -- (1) core mental models, (2) fundamental expert disagreements, (3) deep-understanding test questions -- maps an entire field's intellectual landscape in minutes (from notebooklm accelerated learning)

- Uploading massive context (6 textbooks, 15 papers, all lecture transcripts) before querying gives the model enough material to identify cross-source patterns rather than echoing a single author's perspective (from notebooklm accelerated learning)

- Using AI-generated "deep understanding vs. memorization" questions as a self-test forces active recall against the hardest conceptual gaps (from notebooklm accelerated learning)

- The error-driven follow-up loop -- "explain why this is wrong and what I'm missing" after each wrong answer -- turns mistakes into targeted micro-lessons, compressing the feedback cycle from weeks to minutes (from notebooklm accelerated learning)

- Training AI skills on curated reference assets (e.g., from a copywriting resource site) dramatically improves output quality versus generic prompting -- the pattern is encoding domain knowledge into reusable AI configurations rather than relying on zero-shot generation (from ai copywriting skill training)

- A compounding learning loop forms when AI query answers are saved back into the raw/ knowledge folder, so each subsequent answer is better informed than the last -- the knowledge base improves itself through use (from claude personal knowledge base workflow)

- 45 minutes of weekend setup (folder structure + CLAUDE.md schema) becomes a continuously improving company asset through consistent usage and feeding results back -- low upfront cost, compounding return (from claude personal knowledge base workflow)

- Monthly knowledge-base health checks -- asking the AI to flag contradictions, surface unexplored topics, and suggest articles to fill gaps -- turn a static archive into a self-auditing learning system (from claude personal knowledge base workflow)

- claude-smart makes agent learning explicit: it converts session mistakes into reusable, actionable rules across projects, such as learning to avoid a repo's hanging test watch mode, rather than merely remembering that the mistake happened (from claude smart self improving plugin)

- Running an AI prep prompt before 1:1s converts "flying blind" into structured conversations: a 5-minute routine replaces agenda-glancing, surfaces what might be missed, and creates more intentional dialogue (from ai prep one on one meetings)

- OpenAI's Forward Deployed Engineer interview deliberately avoids LeetCode-style problems and instead tests candidates on "the actual loop" of real-world implementation -- signaling that learning the applied workflow now matters more than rehearsing algorithmic puzzles (from openai forward deployed engineer interview process)
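The compounding knowledge-base loop and the monthly health check above can be sketched with plain files. Only the `raw/` folder and the `CLAUDE.md` schema file come from the source; the date-prefixed file naming, the helper names, and the audit prompt wording are hypothetical, and the model call itself is omitted.

```python
import re
from datetime import date
from pathlib import Path
from typing import Optional

# Assumed layout (only raw/ and CLAUDE.md are named in the source):
#   knowledge_base/
#     CLAUDE.md   <- schema file, not touched by this sketch
#     raw/        <- source notes plus saved Q&A answers


def slugify(text: str) -> str:
    """Turn a question into a short, filesystem-safe file stem."""
    slug = re.sub(r"[^a-z0-9]+", "-", text.lower()).strip("-")
    return slug[:60] or "untitled"


def save_answer(kb_root: Path, question: str, answer: str,
                on: Optional[date] = None) -> Path:
    """Write a Q&A pair back into raw/ so later queries can draw on it."""
    on = on or date.today()
    raw = kb_root / "raw"
    raw.mkdir(parents=True, exist_ok=True)
    path = raw / f"{on.isoformat()}-{slugify(question)}.md"
    path.write_text(f"# {question}\n\n{answer}\n", encoding="utf-8")
    return path


def health_check_prompt(kb_root: Path) -> str:
    """Build the monthly audit prompt listing every note in raw/.

    Assumes the agent receiving the prompt can open the listed files itself.
    """
    notes = sorted((kb_root / "raw").glob("*.md"))
    listing = "\n".join(f"- {p.name}" for p in notes)
    return (
        f"Review these {len(notes)} notes:\n{listing}\n\n"
        "1. Flag any contradictions between notes.\n"
        "2. List topics that are mentioned but never explored.\n"
        "3. Suggest articles that would fill the biggest gaps."
    )
```

Because saved answers land in the same folder the model reads from, every `save_answer` call enlarges the context available to the next query, and the health check audits that growing pile.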

LLM Input Optimization

LLMs are fluent in Markdown because they are trained on vast amounts of it, so converting files to Markdown before feeding them to an LLM yields better extraction, reasoning, and token efficiency than raw text or HTML (from markitdown microsoft file converter)
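To show what conversion buys, here is a deliberately tiny HTML-to-Markdown converter built on Python's standard `html.parser`. It is not MarkItDown (whose README shows `MarkItDown().convert(path)` returning a result with `.text_content`); it handles only h1-h3 headings, paragraphs, list items, and bold text, and exists to show markup disappearing while document structure survives.

```python
import re
from html.parser import HTMLParser


class MiniMarkdown(HTMLParser):
    """Toy converter: h1-h3, p, ul/ol/li (all rendered as bullets), b/strong."""

    HEADINGS = {"h1": "# ", "h2": "## ", "h3": "### "}

    def __init__(self) -> None:
        super().__init__()
        self.parts: list[str] = []

    def handle_starttag(self, tag, attrs):
        if tag in self.HEADINGS:
            self.parts.append("\n\n" + self.HEADINGS[tag])
        elif tag in ("p", "ul", "ol"):
            self.parts.append("\n\n")
        elif tag == "li":
            self.parts.append("\n- ")
        elif tag in ("b", "strong"):
            self.parts.append("**")

    def handle_endtag(self, tag):
        if tag in ("b", "strong"):
            self.parts.append("**")

    def handle_data(self, data):
        # Collapse whitespace runs but keep a single space between words.
        if data.strip():
            self.parts.append(re.sub(r"\s+", " ", data))


def html_to_markdown(html: str) -> str:
    parser = MiniMarkdown()
    parser.feed(html)
    parser.close()
    lines = [line.strip() for line in "".join(parser.parts).splitlines()]
    # Squash runs of blank lines down to one blank line.
    return re.sub(r"\n{3,}", "\n\n", "\n".join(lines)).strip()


if __name__ == "__main__":
    html = "<h1>Pricing</h1><p>Plans start at <b>$10</b>.</p><ul><li>Basic</li><li>Pro</li></ul>"
    print(html_to_markdown(html))
```

On that input the output keeps the heading, emphasis, and list while dropping every tag, so the model spends fewer tokens on markup.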

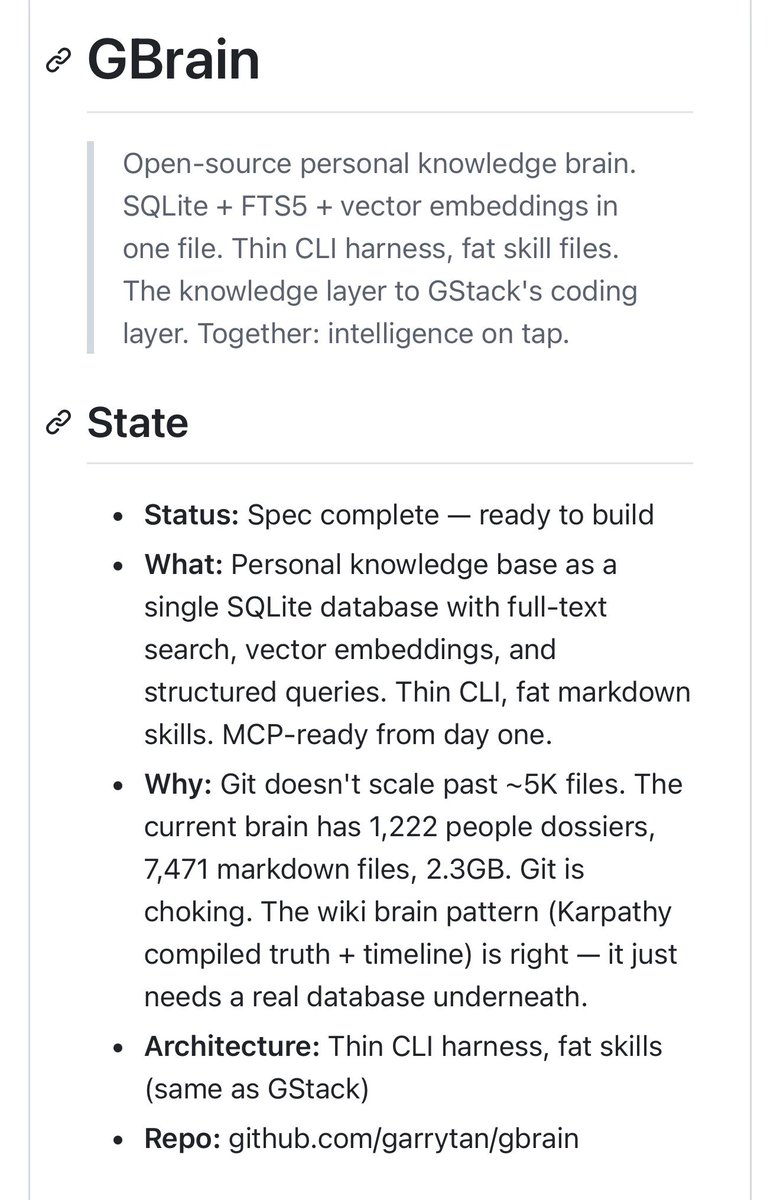

Karpathy-style git wikis for knowledge bases can grow to multi-gigabyte sizes (2.3GB+), approaching the roughly 5GB repository size that Git hosts such as GitHub strongly recommend staying under; that pressure pushed this project toward a migration to SQLite. Plan for a database backend early in long-running knowledge projects (from garry tan openclaw git wiki gstack)
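One way to plan for a database backend is to keep the wiki's Markdown pages as the source of truth and mirror them into SQLite. A sketch using Python's built-in `sqlite3`; the `pages` table, its two columns, and the `LIKE`-based search are assumptions, not the schema used by the project this insight comes from.

```python
import sqlite3
from pathlib import Path

# Hypothetical schema: one row per wiki page, keyed by its relative path.
SCHEMA = """
CREATE TABLE IF NOT EXISTS pages (
    path TEXT PRIMARY KEY,
    body TEXT NOT NULL
)
"""


def migrate_wiki(wiki_dir: Path, db_path: str) -> int:
    """Copy every Markdown page under wiki_dir into SQLite; return page count."""
    rows = [
        (p.relative_to(wiki_dir).as_posix(), p.read_text(encoding="utf-8"))
        for p in sorted(wiki_dir.rglob("*.md"))
    ]
    conn = sqlite3.connect(db_path)
    try:
        conn.execute(SCHEMA)
        # INSERT OR REPLACE makes the migration safe to re-run as the wiki grows.
        conn.executemany(
            "INSERT OR REPLACE INTO pages (path, body) VALUES (?, ?)", rows
        )
        conn.commit()
    finally:
        conn.close()
    return len(rows)


def search(db_path: str, term: str) -> list[str]:
    """Return paths of pages whose body contains term (a simple LIKE scan)."""
    conn = sqlite3.connect(db_path)
    try:
        cur = conn.execute(
            "SELECT path FROM pages WHERE body LIKE ? ORDER BY path",
            (f"%{term}%",),
        )
        return [row[0] for row in cur.fetchall()]
    finally:
        conn.close()
```

A single database file sidesteps repository size limits entirely, and the re-runnable migration lets the git wiki and the database coexist during the transition.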

NotebookLM podcast generation combined with a .md analysis file creates a dual-format learning artifact: audio for passive consumption and structured markdown for cross-LLM integration and further querying (from notebook lm podcast markdown analysis)

Prompting Techniques

- "Socratic prompting" -- asking AI questions instead of giving it directives -- is claimed to significantly improve output quality by forcing the model to reason through the problem rather than pattern-match to a response (from socratic prompting technique)

- The technique inverts the typical prompt paradigm: instead of instructing the model, you guide it through questions that may activate deeper reasoning chains (from socratic prompting technique)

- Prompt engineering for domain expertise continues to gain traction -- users want specific, structured prompts tailored to professional workflows (market research, consulting, competitive intel) rather than generic AI interactions (from claude market research prompts)
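A domain prompt library written in the Socratic style might look like the sketch below: each workflow maps to questions the model answers before producing the deliverable. The library entries, the `{market}` placeholder, and the `socratic_prompt` helper are hypothetical; any quality gain remains the sources' claim.

```python
# Hypothetical domain prompt library. Each entry is phrased as questions the
# model must reason through, rather than as a single directive.
PROMPT_LIBRARY: dict[str, list[str]] = {
    "market_research": [
        "Who are the buyers in {market}, and how do they segment?",
        "What evidence suggests {market} is growing or shrinking?",
        "Which assumptions about {market} would change the conclusion if wrong?",
    ],
    "competitive_intel": [
        "What does the leading competitor in {market} charge, and how do we know?",
        "What do their recent hires and releases suggest about their roadmap?",
        "Where are they weakest relative to us?",
    ],
}


def socratic_prompt(workflow: str, market: str, goal: str) -> str:
    """Wrap a goal in the workflow's guiding questions, answered in order first."""
    questions = [q.format(market=market) for q in PROMPT_LIBRARY[workflow]]
    numbered = "\n".join(f"{i}. {q}" for i, q in enumerate(questions, start=1))
    return (
        f"Before you {goal}, answer these questions in order:\n"
        f"{numbered}\n"
        f"Then use your answers to {goal}."
    )


if __name__ == "__main__":
    print(socratic_prompt("market_research", "EU e-bikes", "size the market"))
```

The directive version would simply be "Size the EU e-bike market"; the Socratic version makes the model surface buyers, evidence, and fragile assumptions before it commits to a number.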

Research and Analysis

- Claude is being positioned as a market research tool competitive with consulting-grade analysis, with users reverse-engineering prompt strategies from McKinsey and investment bank workflows (from claude market research prompts)

- AI-generated consulting-grade deliverables (McKinsey/BCG-style slides with complex data visualizations) are becoming accessible to individuals, with Kimi generating professional presentations directly from detailed prompts (from kimi mckinsey slide prompt)

- The prompt engineering pattern for high-quality slide generation requires specifying three layers: content structure (frameworks, data types), visual style (typography, color palette), and layout density (from kimi mckinsey slide prompt)
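The three-layer pattern can be made explicit by capturing each layer as a field and rendering all three into one prompt. The `SlideSpec` class, its field names, and the prompt wording are illustrative assumptions; nothing here is a Kimi API, and the output is plain text for whichever slide generator you use.

```python
from dataclasses import dataclass


@dataclass
class SlideSpec:
    # Layer 1: content structure
    framework: str          # e.g. "2x2 matrix", "waterfall chart"
    data_types: list[str]   # e.g. ["market share %", "YoY growth"]
    # Layer 2: visual style
    typography: str
    palette: list[str]      # hex color codes
    # Layer 3: layout density
    density: str            # e.g. "one chart plus three takeaways"

    def to_prompt(self, topic: str) -> str:
        """Render all three layers into a single slide-generation prompt."""
        return "\n".join([
            f"Create a consulting-style slide on {topic}.",
            f"Content structure: use a {self.framework} showing "
            f"{', '.join(self.data_types)}.",
            f"Visual style: {self.typography}; color palette "
            f"{', '.join(self.palette)}.",
            f"Layout density: {self.density}.",
        ])


if __name__ == "__main__":
    spec = SlideSpec(
        framework="2x2 matrix",
        data_types=["market share %", "YoY growth"],
        typography="sans-serif headings, 12pt body",
        palette=["#0B1F3A", "#2F80ED"],
        density="one chart plus three takeaways",
    )
    print(spec.to_prompt("EV charging"))
```

Forcing every prompt through the same three fields makes it hard to forget a layer, which is where vague slide prompts usually fall short.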

Open-Source Learning

- "GitHub is the new Harvard" frames open-source repos as the primary educational institution for AI practitioners -- credentials matter less than demonstrated learning from public codebases (from most starred ai repos)

- High-star AI repos on GitHub represent a curated, community-validated curriculum -- the engagement signal (stars) acts as a quality filter that traditional education lacks (from most starred ai repos)
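Using stars as a curriculum filter can be sketched against GitHub's REST search endpoint, which accepts `sort=stars` and qualifiers such as `topic:` and `stars:>=` in `q`. The helper names, the 1,000-star default, and the top-N cut are assumptions; the HTTP request itself is omitted, and `curriculum` expects the `items` list from the search response, where each item carries `full_name` and `stargazers_count`.

```python
from urllib.parse import urlencode


def star_search_url(topic: str, min_stars: int = 1000, per_page: int = 20) -> str:
    """GitHub REST search URL for repos on a topic, most-starred first."""
    params = {
        "q": f"topic:{topic} stars:>={min_stars}",
        "sort": "stars",
        "order": "desc",
        "per_page": per_page,
    }
    return "https://api.github.com/search/repositories?" + urlencode(params)


def curriculum(items: list[dict], top_n: int = 5) -> list[str]:
    """Rank search-result items by star count and keep the top N repo names."""
    ranked = sorted(items, key=lambda r: r["stargazers_count"], reverse=True)
    return [r["full_name"] for r in ranked[:top_n]]


if __name__ == "__main__":
    print(star_search_url("llm"))
```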

Why Coding, Math, and Research Outpace General Use

Karpathy attributes the dramatic AI improvements in coding, math, and research to two factors. These domains offer verifiable rewards for RL (a unit test either passes or fails), and they concentrate B2B value that justifies prioritizing them: the goldmines drive the focus (from ai capability gap coding vs general use)
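To make "verifiable reward" concrete: in coding, a reward can be computed mechanically by running tests, with no human judgment involved. A toy Python sketch, assuming the candidate is an in-process function and each test is an `(args, expected)` pair; real RL pipelines execute model-written code in sandboxes, which this does not attempt.

```python
from typing import Callable

# Hypothetical test format: (positional args, expected return value).
Case = tuple[tuple, object]


def verifiable_reward(candidate: Callable, tests: list[Case]) -> int:
    """Binary RL reward: 1 if the candidate passes every test, else 0."""
    for args, expected in tests:
        try:
            if candidate(*args) != expected:
                return 0
        except Exception:
            return 0  # a crash counts as a failed test
    return 1


if __name__ == "__main__":
    tests = [((2, 3), 5), ((-1, 1), 0)]
    print(verifiable_reward(lambda a, b: a + b, tests))
```

General chat has no equivalent check: there is no test that says whether an essay or a piece of advice is "correct", so the reward signal is far noisier.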

The free-tier impression of AI is structurally misleading: free, older, or deprecated chat models don't reflect $200/month frontier agentic capability. Codex sessions running an hour or more can restructure entire codebases or find system vulnerabilities (from ai capability gap coding vs general use)

Visual Training Data for Agents

- Refero's 2,000 DESIGN.md files (colors, typography, spacing, and layout patterns from top products) directly address why AI agents make ugly UIs: they've never seen good design. The approach is exposure-as-training, packaged for in-context learning rather than fine-tuning (from refero design systems ai agents)
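Feeding design references in context, rather than fine-tuning, amounts to assembling selected DESIGN.md files into the prompt. The `<product>/DESIGN.md` directory layout, the character budget, and both helper names are hypothetical; this is not Refero's tooling.

```python
from pathlib import Path


def design_context(design_dir: Path, products: list[str],
                   max_chars: int = 8000) -> str:
    """Concatenate selected DESIGN.md references into one in-context block."""
    sections = []
    used = 0
    for name in products:
        path = design_dir / name / "DESIGN.md"  # hypothetical layout
        text = path.read_text(encoding="utf-8")
        if used + len(text) > max_chars:
            break  # stop before overflowing the context budget
        sections.append(f"## Reference: {name}\n{text.strip()}")
        used += len(text)
    return "\n\n".join(sections)


def ui_prompt(task: str, context: str) -> str:
    """Prepend the design references to a UI-generation task."""
    return (
        "Follow the colors, typography, spacing, and layout patterns "
        f"in these design references.\n\n{context}\n\nTask: {task}"
    )
```

The budget check matters because each reference competes with the task itself for context space; a few well-chosen references are likely to beat many truncated ones.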

Voices

24 contributors

Garry Tan

@garrytan

President & CEO @ycombinator —Founder https://t.co/7aoJjp1iIK—designer/engineer who helps founders—SF Dem accelerating the boom loop—haters not allowed in my sauna

Andrej Karpathy

@karpathy

I like to train large deep neural nets. Previously Director of AI @ Tesla, founding team @ OpenAI, PhD @ Stanford.

Cody Schneider

@codyschneiderxx

folllow for shiposting about the growth tactics i'm using to grow my startup building @graphed with @maxchehab Get Started Free - https://t.co/stXlkQBlSj

Ihtesham Ali

@ihtesham2005

investor, writer, educator, and a dragon ball fan 🐉

Sukh Sroay

@sukh_saroy

Sharing daily insights on AI, No Code, & Tech Tools • Follow me to master AI to level up your life • DM for Collabs

Mayank Vora

@aiwithmayank

AI doesn’t have to be complicated - I’m here to show you how to actually use it and break down the latest trends in AI and Tech.

Corey Ganim

@coreyganim

Simplifying AI for non-technical entrepreneurs. Built an eCom biz to $16M+ revenue. Built (then sold) a coaching biz to $340k ARR.

Dave Kline

@dklineii

Become the Leader You’d Follow | Founder @ MGMT | CEO Coach | Advisor | Speaker | Trusted by 300K+ leaders. | Work with us: https://t.co/6P5ZGqxCyc

Kevin Rose

@kevinrose

building all the things | Chairman @digg, Advisor @trueventures | Podcast: Random Show w/ @tferriss. | Ex: @google, Board of Directors: @ouraring, @hodinkee

Max Blade

@_MaxBlade

🔥 "QuickClaw" - OpenClaw on your iphone https://t.co/u5NW0xhOZD 📺 YT -https://t.co/lrpw6a0FQQ

Aniket Panjwani

@aniketapanjwani

I teach agentic coding to economists || PhD Economics Northwestern || Director of AI/ML @ Payslice || ex-MLOps @ Zelle

Mike Bespalov

@bbssppllvv

Making AI design not suck. Founder of @referodesign (250K+ users). Ex- @clerk

Crystal

@crystalsssup

@Kimi_Moonshot Staff. UCSD Alum. Personality goes a long way. Be useful.

Eth

@EtherCoins

Bitcoin evangelist | Alts observer | Blockchain enthusiast since 2013 | AI visuals & engineering | Fitness adept | Financial analysis =/= advice

Machina

@EXM7777

running ai-powered agencies | https://t.co/fMOmHWBgHG

Griffin Hilly

@GriffinHilly

Bond trader by day, pro-humanism/nuclear on my own time. @MadiHilly’s biggest fan

Hasan Toor

@hasantoxr

AI & Tech Educator • Sharing insights & practical ways to use AI & Tech Tools for you & your daily business

Rimsha Bhardwaj

@heyrimsha

Helping you master AI daily with step by step AI guides, & practical tools • AI Educator & Writer • DM for Collab

rahul

@rahulgs

head of applied ai @ ramp

Shrey Pandya

@shreypandya

building @browserbase // @neo, @v1michigan 〽️

Tech with Mak

@techNmak

AI, coding, software, and whatever’s on my mind.

Teknium 🪽

@Teknium

Cofounder and Lead Engineer - Hermes Agent @NousResearch, prev @StabilityAI Github: https://t.co/LZwHTUFwPq HuggingFace: https://t.co/sN2FFU8PVE

PULKIT WALIA

@WaliaPulkit

Rebuilding FDE Hiring | Founding team @ Urban Company (Seed → $3B IPO) | HBS

Yi Lu

@yyyiiillluuu

TL for Meta AI personalization and agent memory (Reality lab) Former head of ML at Forethought (acquired by Zendesk) Adjunct prof University of Washington